Introduction

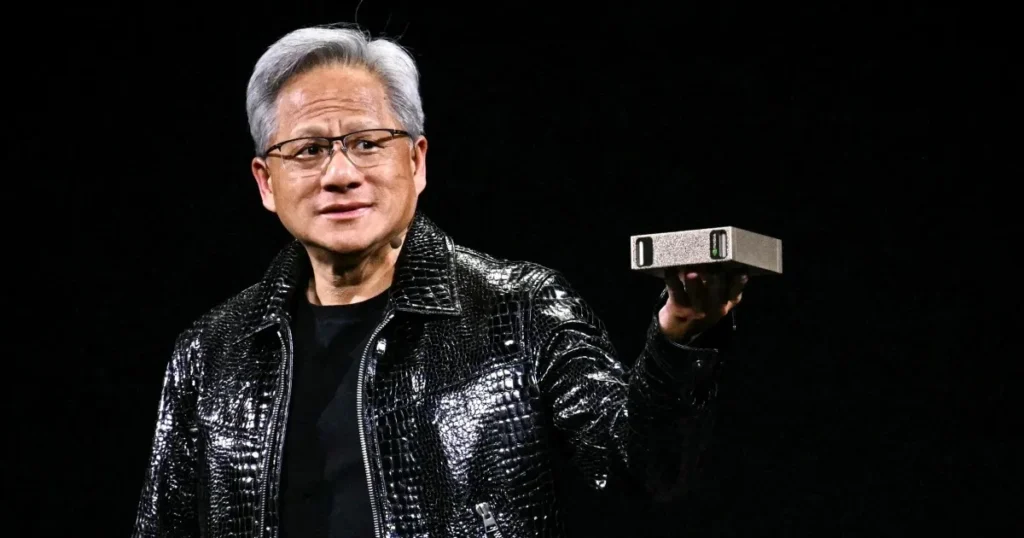

Jensen Huang (Jen-hsun Huang) is the cofounder and long-time CEO of NVIDIA, the company whose GPU architecture and software stack became the dominant substrate for modern AI, especially large-scale neural networks and natural language processing (NLP) systems. Huang’s trajectory, from early chip design work to leading a platform company that supplies hardware, compilers, libraries, and developer ecosystems, reads like a case study in systems thinking: he didn’t just ship silicon; he built the runtime, the toolchain, and the community that made that silicon useful for researchers and engineers.

Viewed through an NLP lens, Huang’s work is analogous to delivering a fast tensor engine (the GPU) plus an efficient low-level runtime (CUDA/cuDNN) and a thriving model training ecosystem (frameworks, libraries, cloud integrations). That stack reduced the “friction” between algorithm ideas and at-scale experiments, enabling larger models and faster iteration cycles. This pillar profile reframes his story in terms familiar to ML/NLP practitioners and leaders: the technical pivot points, the strategic architecture decisions, leadership lessons, timeline, FAQs, and a balanced assessment of risks and legacy as of 2025.

Quick facts

- Full name: Jen-hsun (Jensen) Huang

- Born: February 17, 1963 (age 62 in 2025)

- Birthplace: Taiwan (Tainan / Taipei region)

- Nationality: Taiwanese-American

- Known for: Co-founder & CEO of NVIDIA; leading GPU → AI compute evolution; championing CUDA and the developer ecosystem

Why this profile matters

If you want to understand how infrastructure choices shape what is feasible in NLP and AI, Jensen Huang’s leadership shows how hardware, system software, and developer ergonomics combine to determine model scale, iteration speed, and deployment patterns. Huang’s strategic bets (investing in software layers, developer APIs, and partnerships with cloud and hyperscaler providers) lowered the activation energy for large models and turned raw compute into an accessible tool for research and production. For anyone building ML infrastructure, this is a model of stack design and platform evangelism.

Childhood & early life (concise)

Born in Taiwan in 1963, Jensen Huang grew up in an environment that prized education and cross-cultural adaptability. His father worked as an engineer and his mother was a teacher; the family moved during his childhood (including time in Thailand), exposing him to multiple cultures and languages. A small family anecdote often shared: early English practice routines with his mother helped him later when communicating across cultures and institutions in the U.S. Those formative experiences contributed to both technical curiosity and the social fluency useful for leading a global company.

Education & formative influences

- Undergraduate: B.S., Electrical Engineering, Oregon State University

- Graduate: M.S., Electrical Engineering, Stanford University

At Stanford, Huang deepened his systems and semiconductor knowledge and built a professional network that later intersected with NVIDIA’s founding. For ML and NLP people, think of Huang’s education as his “foundational weights”: core engineering literacy and a network that enabled later transfer learning into entrepreneurship and platform building.

The founding of NVIDIA: the Denny’s origin story

In 1993, Jensen Huang co-founded NVIDIA with Chris Malachowsky and Curtis Priem. The oft-told anecdote is that the three sketched ideas in a Denny’s restaurant in San Jose, a humble origin story that belies the complex systems engineering they later delivered. Huang became CEO at inception and guided early product strategy: focus on 3D graphics acceleration for games and professional visualization, win OEM and board partners, and keep close alignment with developer toolchains. Those decisions created a focused product-market fit within the PC and workstation ecosystem that later allowed an architectural pivot to general-purpose compute.

Early struggles and survival (1990s): bootstrapping a systems company

Like many hardware start-ups, early NVIDIA dealt with tight capital, manufacturing complexity, and intense competition. GPUs at the time were specialized processors for rendering; to scale, NVIDIA needed good silicon design, aggressive OEM relationships, and developer engagement. Huang’s early leadership emphasized engineering rigor, efficient cash allocation, and cultivating developer communities: the cultural primitives that later enabled CUDA and the pivot to compute.

The GPU revolution: how a gaming chip became an AI engine

What a GPU is and why it mattered

A GPU (graphics processing unit) is a highly parallel processor designed to execute many simple arithmetic operations simultaneously, which is precisely the compute pattern of the matrix multiplications and tensor contractions that underlie neural networks. In NLP models, from RNNs to Transformers, much of the heavy lifting is batched dense linear algebra (GEMM: GEneral Matrix-matrix Multiply). GPUs accelerate these primitives by offering thousands of cores, high memory bandwidth, and specialized units (e.g., Tensor Cores) optimized for mixed-precision matrix math. In NLP terms: GPUs are hardware accelerators for the forward and backward passes, for the low-level kernels those passes are lowered to, and for fast batched inference.
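To make the GEMM pattern concrete, here is a minimal pure-Python sketch. It is illustrative only: the function names `gemm` and `gemm_flops` and the toy matrices are assumptions for this example, not part of any NVIDIA library, and real workloads would call an optimized BLAS rather than nested loops. The point it shows is that every output element can be computed independently, which is the parallelism a GPU exploits.

```python
def gemm(a, b):
    """Naive GEMM: returns C = A @ B for row-major lists of lists."""
    m, k, n = len(a), len(b), len(b[0])
    assert all(len(row) == k for row in a), "inner dimensions must match"
    c = [[0.0] * n for _ in range(m)]
    for i in range(m):
        for j in range(n):
            # Each (i, j) output depends only on row i of A and column j of B,
            # so all m * n outputs could be computed in parallel.
            acc = 0.0
            for p in range(k):
                acc += a[i][p] * b[p][j]
            c[i][j] = acc
    return c


def gemm_flops(m, k, n):
    """One multiply and one add per (i, j, p) triple."""
    return 2 * m * k * n


# Hypothetical toy sizes: 2 tokens with hidden size 3, projected to 2 features.
x = [[1.0, 2.0, 3.0],
     [4.0, 5.0, 6.0]]
w = [[1.0, 0.0],
     [0.0, 1.0],
     [1.0, 1.0]]
y = gemm(x, w)
print(y)                    # [[4.0, 5.0], [10.0, 11.0]]
print(gemm_flops(2, 3, 2))  # 24
```

The operation count grows as 2·m·k·n, so scaling batch size and hidden dimensions multiplies the arithmetic quickly; that is why throughput on this one primitive matters so much for training time.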

The software moment: CUDA and lowering the abstraction gap

NVIDIA’s strategic inflection point was not only hardware but a software stack that made GPUs broadly programmable. CUDA (Compute Unified Device Architecture), announced in 2006 and released in 2007, provided a C/C++-like programming model and runtime that allowed developers to express parallel computations directly on GPUs. For ML researchers this meant being able to compile and run custom kernels, optimize memory layouts, and iterate faster. CUDA acted like a low-level ML runtime: libraries such as cuBLAS (a GPU implementation of BLAS), cuDNN, and NCCL were built on it, and frameworks like PyTorch and TensorFlow later layered higher-level bindings on top of those libraries. The net effect: the GPU transformed from a graphics specialist into a general-purpose tensor engine.
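The core idea of the CUDA programming model, where one kernel function is run by many threads organized into blocks, can be sketched without a GPU. The following is a sequential Python emulation, not CUDA code: `saxpy_kernel` and `launch` are hypothetical names invented for this illustration, and a real GPU would execute the threads in parallel rather than in a loop.

```python
def saxpy_kernel(block_idx, block_dim, thread_idx, n, alpha, x, y, out):
    """Work done by one emulated thread; mirrors the shape of a CUDA kernel body."""
    i = block_idx * block_dim + thread_idx  # global index of this thread
    if i < n:                               # guard: the last block may overhang n
        out[i] = alpha * x[i] + y[i]


def launch(kernel, grid_dim, block_dim, *args):
    """Sequential stand-in for a kernel launch over grid_dim blocks of block_dim threads."""
    for block_idx in range(grid_dim):
        for thread_idx in range(block_dim):
            kernel(block_idx, block_dim, thread_idx, *args)


n = 10
x = [float(i) for i in range(n)]
y = [1.0] * n
out = [0.0] * n

block_dim = 4
grid_dim = (n + block_dim - 1) // block_dim  # ceiling division: 3 blocks cover 10 elements
launch(saxpy_kernel, grid_dim, block_dim, n, 2.0, x, y, out)
print(out)  # [1.0, 3.0, 5.0, 7.0, 9.0, 11.0, 13.0, 15.0, 17.0, 19.0]
```

The developer writes only the per-element logic and an index calculation; the launch configuration decides how many threads run it. That separation is a large part of why the model was approachable for researchers who were not graphics programmers.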

Key turning points

- 1993: NVIDIA founded.

- 1999: GPUs become mainstream for 3D graphics and gaming.

- 2006–2007: CUDA announced and released; software made GPUs general-purpose compute devices.

- 2010s: NVIDIA expands into data centers, visualization, and automotive markets.

- 2020s: NVIDIA GPUs underpin major AI training and inference workloads; the company scales into AI infrastructure leadership.

Leadership style & strategy: why Huang’s bets worked

Jensen Huang’s leadership mixes product-driven engineering, platform strategy, and storytelling, each of which maps to well-known ML design and product principles.

- Engineering credibility: Huang speaks the technical language of chips, compilers, and systems, similar to a lead ML engineer who can both design model architectures and diagnose training instability. That credibility speeds decision loops and builds trust with engineering teams and customers.

- Platform thinking: Rather than selling point products, Huang built a stack: hardware (silicon), runtime (CUDA), libraries (cuDNN/cuBLAS), and developer workflows (SDKs, tools, training programs). In ML terms, this is akin to offering a model zoo plus training pipelines and managed infra, which increases switching costs and yields network effects.

- Showmanship & product theater: NVIDIA’s events and Huang’s keynotes create narratives that frame products as transformational, analogous to publishing a compelling benchmark or demo that shapes community perception of a new model.

- Patient R&D and capital allocation: Building custom silicon and compilers is capital intensive and requires multi-year roadmaps, much like investing in pretraining compute budgets and infrastructure before the research community fully demonstrates returns.

A practical table: Decision → Why it mattered → Outcome

| Decision | Why it mattered | Outcome |
| --- | --- | --- |
| Invest in software (CUDA) | Made GPUs programmable for research & enterprise | Rapid adoption beyond gaming; strong developer ecosystem |
| Focus on developer ecosystem | Developers drive platform stickiness | Long-term competitive advantage and talent attraction |
| Partner with hyperscalers | Secured large orders and reference deployments | Massive data-center revenue growth |
| Strong public storytelling | Raised brand and perceived value | Premium valuation and influence in market |

Pros & Cons

Pros

- Long-term vision and platform building.

- Large developer base and ecosystem (CUDA, libraries).

- Strong technical credibility and engineering leadership.

Cons

- Product concentration: heavy exposure to GPUs and related AI compute.

- Geopolitical and supply chain sensitivity: semiconductors are globally entangled with trade policy.

- Competition: cloud providers and chip designers can design in-house accelerators and custom ASICs.

Net worth & 2025 financial snapshot

By 2025 Jensen Huang ranks among the world’s wealthiest executives. Most of his personal wealth is tied to NVIDIA equity. Public trackers (Forbes and similar) report valuations in the tens to over a hundred billion dollars depending on market levels. For live numbers, consult current billionaire trackers; the salient point for this profile is how Huang’s equity-heavy stake aligns his incentives with long-term company performance.

How GPUs and CPUs compare

| Feature | GPU (NVIDIA focus) | CPU |
| --- | --- | --- |
| Parallelism | Thousands of lightweight arithmetic cores for batched tensor ops | Few powerful cores optimized for sequential/branching logic |
| Best for | Dense linear algebra, matrix multiply, batched inference/training | Control plane, OS tasks, preprocessing, single-threaded workloads |
| Software | CUDA, cuDNN, NCCL, libraries & kernels | Compilers, OS APIs, general runtime |
| Latency vs throughput | High throughput for large batches; per-op latency depends on the kernel | Lower per-op latency for single tasks |
| Cost profile | Higher upfront cost, which pays off only with effective cluster use; amortized over large-scale training | Cheaper for light compute and control tasks |
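The latency versus throughput row can be illustrated with a toy timing model. This is a sketch under stated assumptions: the function `batch_time_ms` and every number below are hypothetical values chosen only to show the shape of the trade-off, not measurements of any real CPU or GPU.

```python
def batch_time_ms(batch_size, fixed_overhead_ms, per_wave_ms, parallel_width):
    """Toy model: fixed overhead plus one time step per 'wave' of parallel items.

    parallel_width is how many items the device processes at once.
    """
    waves = -(-batch_size // parallel_width)  # ceiling division
    return fixed_overhead_ms + waves * per_wave_ms


# Hypothetical device profiles (illustrative, not benchmarks):
# the "CPU" has no launch overhead but handles one item at a time;
# the "GPU" pays a fixed overhead but handles 1024 items per wave.
CPU = dict(fixed_overhead_ms=0.0, per_wave_ms=1.0, parallel_width=1)
GPU = dict(fixed_overhead_ms=5.0, per_wave_ms=2.0, parallel_width=1024)

for batch in (1, 8, 1024):
    cpu_ms = batch_time_ms(batch, **CPU)
    gpu_ms = batch_time_ms(batch, **GPU)
    print(batch, cpu_ms, gpu_ms)
# 1 1.0 7.0
# 8 8.0 7.0
# 1024 1024.0 7.0
```

Under these assumed numbers the sequential device wins for a single request, while the parallel device wins once the batch is large enough to amortize its fixed overhead. That crossover is the reason batching matters so much for GPU inference.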

Major works & achievements

- Founding NVIDIA (1993): Co-founded and became the company’s long-time CEO.

- CUDA & software ecosystem (mid-2000s onward): Delivered a programmable path from graphics to general compute.

- Market leadership in AI compute (2020s): NVIDIA GPUs became widely used in training and inference across industry and academia.

- Industry honors & awards: Numerous recognitions, memberships, and honorary degrees.

Career journey

- Early career: Engineering positions at AMD and LSI Logic; gained semiconductor and systems experience.

- 1993: Co-founded NVIDIA; became CEO.

- Late 1990s: Grew the gaming GPU market.

- 2006–2007: Launched CUDA, enabling GPU computation beyond graphics.

- 2010s–2020s: Expanded into data centers, visualization, automotive, and AI infrastructure.

Personal life

Huang met his wife, Lori, at Oregon State University. They have two children. Huang is comparatively private about family life but is known for philanthropic gifts to universities and institutions.

What Jensen Huang believes about the future of AI

Huang frames the AI build-out as still early: demand for compute will continue to grow as models become larger and as inference workloads expand across cloud and edge devices. He positions NVIDIA as the provider of accelerated computing across cloud, edge, and enterprise, i.e., the low-level runtime and hardware substrate for the next generation of models.

Timeline: long form (1963 → 2025)

- 1963: Born February 17 in Taiwan.

- 1980s: Undergraduate at Oregon State; M.S. at Stanford.

- 1993: NVIDIA founded (April).

- 1999: GPUs mainstream for 3D graphics.

- 2006–2007: CUDA launched.

- 2010s: Data center expansion, automotive collaborations, visualization products.

- 2020s: Central role in generative AI infrastructure and growth.

- 2025: NVIDIA remains a bellwether for compute demand.

Balanced view: criticisms and responses

Common criticisms

- Overreliance on GPUs: Future compute architectures could diversify away from NVIDIA’s product line.

- Geopolitical exposure: Export controls and supply chain disruptions could hinder sales.

- Cloud providers designing custom accelerators: Hyperscalers could internalize parts of the stack.

Huang’s practical responses

- Deepening software and higher-level libraries to raise switching costs.

- Broad partnerships with cloud providers and OEMs to secure deployment channels.

- Investment in custom silicon, software ecosystems, and services to maintain differentiation.

FAQs

Q: Who is Jensen Huang?

A: Jensen Huang is the cofounder, president, and CEO of NVIDIA; he led the company from a gaming-GPU start-up to a central provider of AI compute.

Q: What is Jensen Huang’s net worth?

A: Estimates vary with the market price. Public trackers like Forbes list Huang in the tens of billions to over a hundred billion dollars, depending on NVIDIA’s stock. Check Forbes for live numbers.

Q: What was Huang’s most important strategic move?

A: Turning the GPU into a programmable platform (via CUDA) so it could be used for general compute tasks, not just graphics.

Q: What awards and recognition has he received?

A: He has received industry honors, been named in TIME lists, and earned engineering awards and honorary degrees.

Q: Is NVIDIA the only company that makes AI accelerators?

A: No. Cloud providers and other chip companies make or design accelerators, but NVIDIA’s software ecosystem and market share give it a strong advantage today.

Conclusion

Jensen Huang’s professional arc, from early chip engineer to CEO of a platform company central to AI infrastructure, is a practical lesson in systems design, product evangelism, and patient engineering. Huang didn’t merely produce faster silicon; he orchestrated an ecosystem (runtime, libraries, partnerships, community) that lowered the barrier between research ideas and production deployments. For the NLP and ML communities that benefited from this stack, that meant larger models, faster experiments, and more real-world applications. The strengths of this approach are clear: platform lock-in, developer loyalty, and the capacity to monetize data-center-scale computation.

The risks are equally salient: competition from custom silicon, geopolitical constraints on supply and markets, and the need to continually innovate in both hardware and software to keep pace with algorithmic advances. For founders, engineers, and product leaders, Huang’s story underscores the strategic value of building full stacks, staying fluent in technical detail, investing over long time horizons, and telling a compelling narrative about why the work matters. If published as a pillar article, this profile should be paired with primary source links and a timeline infographic to maximize credibility and shareability.